Ok, so I apologize, but this is an essay about programming. If you're not interested in programming, then perhaps you will not find anything of interest here. But, who knows, some of the principles may apply elsewhere.

There is a rule of thumb which says that the best programmers are not just more productive than the typical ones, they are 10x as productive. I haven't been able to find any kind of evidence supporting this, but perhaps it exists. In any event, it seems to be an idea that various managers (often former programmers themselves) find plausible. The usual conclusion is that you should never content yourself with hiring a merely adequate programmer, because if you spend twice as much (money or time) getting an excellent one, it will more than pay for itself compared to the cost of hiring mediocre ones.

I think I know where this idea comes from, and the conclusions I draw from it are somewhat different. Let me admit at the outset that I have little evidence, aside from my own meandering experience in programming, for my theory here, so just take it as food for thought.

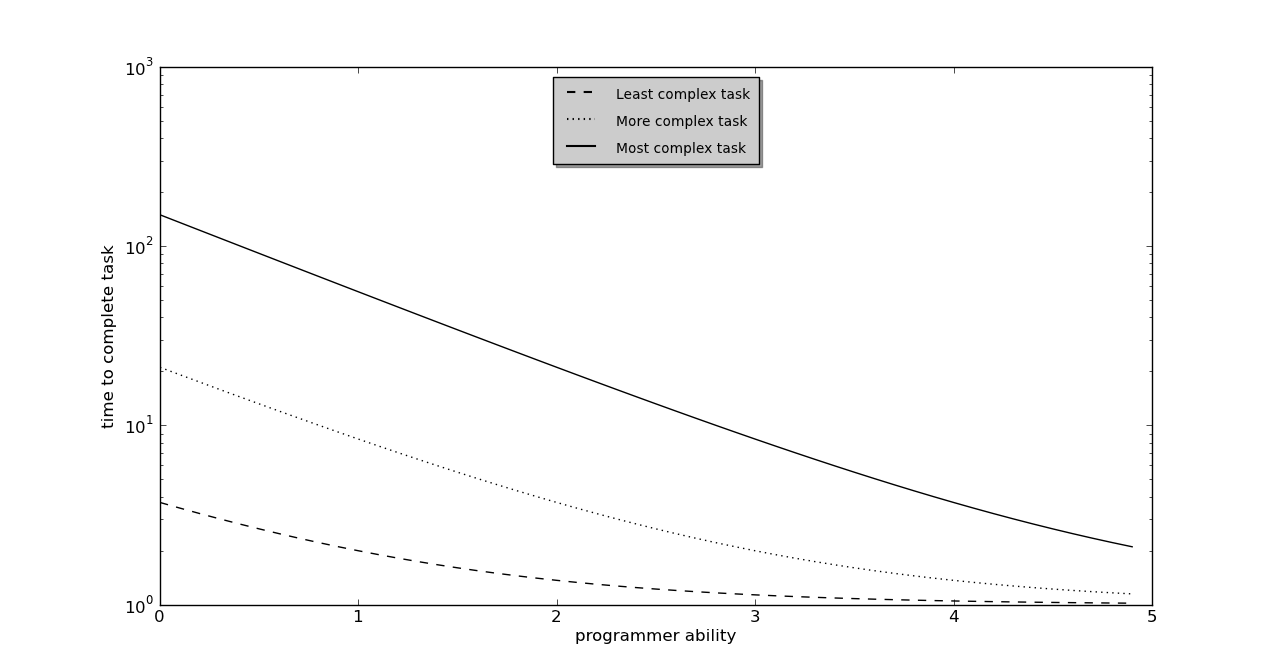

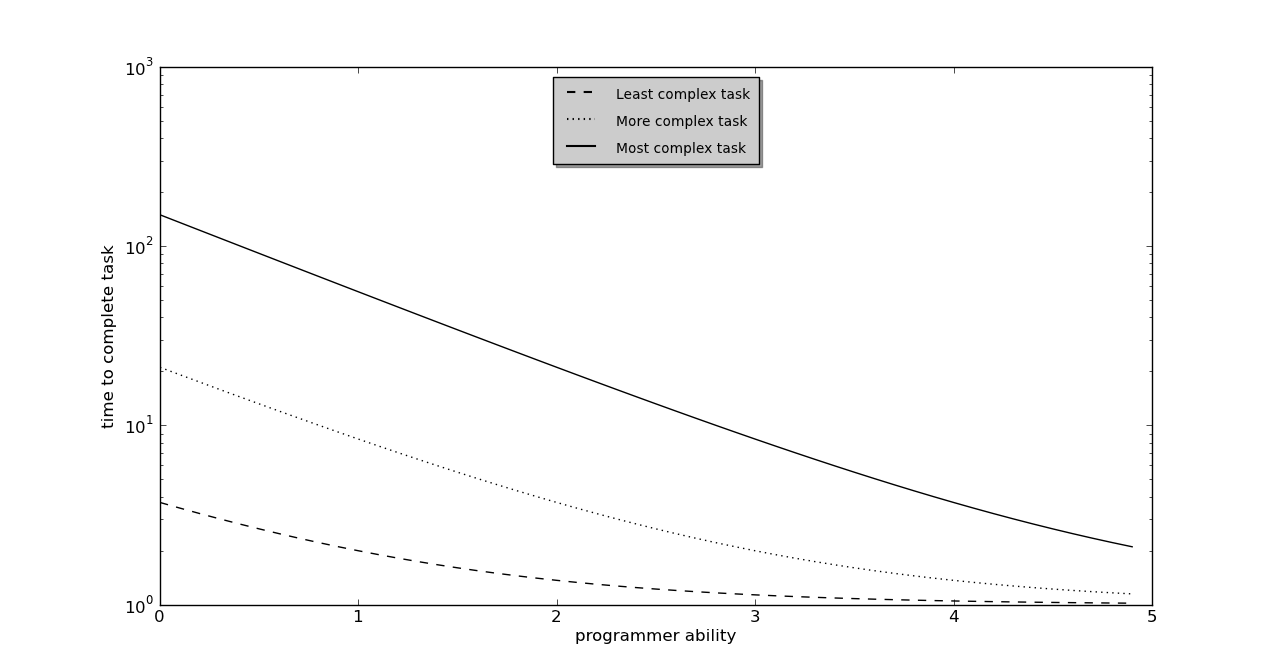

I think that the time it takes for a programmer to complete a task is best thought of as an exponential function. If we call it T (for time), then T = K+L*(1/exp(p-c)), where K and L are some constants, p is the programmer ability, and c is the complexity of the task before them. This gives us something like this:

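The mechanism here is that, in order to program the solution, we need to:

1. Understand the problem well enough to know exactly what we want to tell the computer to do.
2. Tell the computer what to do.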

The second task, telling the computer what to do, is the part of the task which most people think of as programming. However, it is the first task, understanding the problem well enough to know EXACTLY what we want to tell the computer to do, that is the more important (in nearly all cases, and especially nearly all difficult ones). Most importantly, it is the part that has to happen first, and it depends on task complexity. Roughly speaking, we solve the second part with whatever mental bandwidth we have left over after achieving part one. This is because we have to KEEP the mental model of the problem in our heads while we decide how to tell the computer to solve the problem.

If the task is straightforward, then there will not be much difference between the time required by different programmers. Whether it takes 1 minute or 10 minutes doesn't matter much, or at least it isn't very visible in a real-world project.

However, as the task complexity goes up, there is an explosion in the time required. Eventually, we reach the point where a poor or merely ok programmer takes orders of magnitude more time to achieve the task. At that point it either becomes indistinguishable from not being able to do it at all, or it takes so long that the programmer loses confidence and gives up. For some (but not all) values of c, the task complexity, this will give the appearance that a great programmer has 10x the productivity of a merely ok one. For some, it will only be 2x. For others, it may well be 100x.
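To make the shape of that curve concrete, here is a minimal Python sketch of the formula above. The constants K = 1 and L = 1 and the ability values 6 (great) and 1 (ok) are arbitrary assumptions chosen only for illustration; nothing in this essay pins them down.

```python
import math

def task_time(p, c, K=1.0, L=1.0):
    """T = K + L * (1 / exp(p - c)): time for ability p on complexity c."""
    return K + L / math.exp(p - c)

def productivity_ratio(p_great, p_ok, c, K=1.0, L=1.0):
    """How many times longer the ok programmer takes than the great one."""
    return task_time(p_ok, c, K, L) / task_time(p_great, c, K, L)

# Hypothetical ability values, chosen only for illustration.
P_GREAT, P_OK = 6.0, 1.0

# Print the ratio for a range of task complexities.
for c in range(0, 10, 2):
    ratio = productivity_ratio(P_GREAT, P_OK, c)
    print(f"complexity {c}: ok programmer takes {ratio:.1f}x as long")
```

With these made-up numbers the ratio starts between 1x and 2x on easy tasks, passes 10x in the middle of the range, and exceeds 100x at the top. In this particular formula it levels off at exp(p_great - p_ok), so the gap you observe depends both on the ability difference and on where the task sits on the curve.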

However, this is not the end of the story. The reason is related to "technical debt". This is a general term for anything which adds unnecessary complexity to the code, but which we don't have time to fix right now. We know that some portion of the code should be refactored, or the database schema should be changed, or that monster function should be broken up into some smaller pieces that are easier to test/understand/modify, but there isn't time right now. It's like taking out a loan so we can make a purchase now, knowing that we will have to pay it back someday. Technical debt exacts a cost, over time, but tolerating it allows you to get the product into production sooner (this time).

It may seem that these are simply factors influencing the parameter c, the complexity of the task, and to a certain degree that's true. However, there is often a choice to be made between paying down technical debt, and programming new features. As a practical matter, from what I've seen, technical debt gets paid down when it is getting in the way of achieving new features. In other words, TECHNICAL DEBT, AND THUS CODE COMPLEXITY, WILL TEND TO INCREASE UNTIL IT BEGINS TO CAUSE UNACCEPTABLE DELAY IN IMPLEMENTING NEW FEATURES. There is always something else to do, and reducing technical debt is not anyone's idea of fun, and more or less by definition it doesn't in and of itself add any functionality, so it tends to get put off until things slow to a crawl because of it.
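The dynamic in the paragraph above can be sketched as a toy simulation. Everything here is an assumption made for illustration: each feature adds a fixed amount of debt (`debt_per_feature`), debt simply adds to task complexity, the team refactors only after a feature takes longer than `max_acceptable_time`, and a refactor removes a fixed fraction of the debt. The time formula is the one from earlier in this essay.

```python
import math

def task_time(p, c, K=1.0, L=1.0):
    """T = K + L * (1 / exp(p - c)), the time formula from this essay."""
    return K + L / math.exp(p - c)

def simulate(features, p=5.0, base_c=2.0, debt_per_feature=0.5,
             max_acceptable_time=10.0, paydown_fraction=0.5):
    """Return a list of (time_taken, debt_afterwards), one per feature.

    All parameters are hypothetical. Debt is paid down only after a
    feature has taken longer than max_acceptable_time.
    """
    debt = 0.0
    history = []
    for _ in range(features):
        t = task_time(p, base_c + debt)   # debt raises effective complexity
        debt += debt_per_feature          # shipping the feature adds debt
        if t > max_acceptable_time:       # only now does anyone refactor
            debt *= (1.0 - paydown_fraction)
        history.append((t, debt))
    return history

for i, (t, debt) in enumerate(simulate(20), start=1):
    print(f"feature {i:2d}: time {t:6.2f}, debt afterwards {debt:4.2f}")
```

Under these assumptions the debt climbs steadily until a feature blows past the acceptable time, gets partly paid down, and then starts climbing again. It never returns to zero; the code base spends its life hovering just below the point of pain.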

It should be noted that technical debt isn't the only way to get complex code. It is also sometimes the case that what looks like excess complexity or obscurity to a merely ok programmer looks like an elegant solution to the best ones. The amount of complexity you have to hold in your head in order to understand the code in front of you is perceived (by the one holding it in their head) as "complex" only when it threatens to overwhelm them. In other words, if it's easy, then it isn't complex.

In general, though, we can say that the vast majority of the complexity in the world's code is not necessary complexity. The problem is that most code complexity can only be removed by a programmer who is able to hold in their head all of the code complexity, plus all of the requirements of the problem the code is attempting to solve, and still have enough mental bandwidth left over to imagine a more elegant way to do things.

When that is lacking, then what we get is the infamous Big Ball of Mud. This is the colorful name for a system (usually computer code) which is an accretion of many ad-hoc solutions to immediate problems, that over time create a system which is brittle and inflexible (like a dried ball of mud). You can patch over it with a new layer of "mud", i.e. equally inelegant and overly complex code that takes the status quo as a given, and only adds complexity because existing code is too tangled to attempt to remove or refactor any of it. What cannot be done, or at least cannot easily be done, is to dive into the existing code and reduce the complexity, because it is no longer possible for the programmer to hold in their head enough of the current state of how things work, while still having enough mental bandwidth left over to design a better way. You have to either throw the whole thing out and start over, or just plaster over it with new mud. If at any time you try to carve a piece out of it (i.e. remove code that is no longer desirable), you may find that it comes apart in your hands (i.e. other apparently unrelated features stop working). Hence, the adjective "brittle" comes to be applied to something as abstract as software.

Here, we collide with the aforementioned economics of adding new features vs. paying down technical debt. It is far less expensive to pay down technical debt, when it has NOT gotten so bad that only the very best can do it. However, that is exactly when it is least likely that the management of an organization that produces software will be willing to relent on their drive for new features, and devote some amount of resources (i.e. programmer time) towards paying down technical debt. If anyone but the very best is able to pay down that technical debt, then it's not so bad that it is halting progress (for the very best, anyway), and therefore management won't pick paying down technical debt as the Next Thing To Do.

Rather than just griping about management, though, we would do better to look for the cause, and it is not hard to find. It is as if you were never willing to pay down credit card debt until the card was maxed out, because you never knew what the balance was until a purchase was rejected. Managers cannot simply ask their programmers if there is too much technical debt, because programmers will almost never say "no, it's fine", so the answer tells them nothing. So how can a manager tell what their "technical debt" balance is?

One thing they may choose to do is ask their most trusted, senior programmers. This makes sense in a superficial way; these are the programmers who know the code best, and whose judgement is generally most trusted. They are probably, however, the worst ones to say whether code complexity is too high, precisely because of this. They are not only the most knowledgeable about programming in general, they are often people who have worked on the system in question for years; they learned it a piece at a time, as it was growing to its current state. Once the code complexity has gotten high enough for them to notice it, it has gone far past the danger point for anyone else.
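The time formula from earlier suggests why the senior programmers notice last. Solving T = K + L * (1/exp(p - c)) for c gives the complexity at which a programmer of ability p first hits a given "this is painful" time. The sketch below assumes that familiarity with the system can be folded into p; the pain threshold and both ability values are hypothetical.

```python
import math

def task_time(p, c, K=1.0, L=1.0):
    """T = K + L * (1 / exp(p - c)), the time formula from this essay."""
    return K + L / math.exp(p - c)

def complexity_noticed_at(p, pain_time, K=1.0, L=1.0):
    """Complexity c at which task_time(p, c) reaches pain_time.

    Obtained by solving pain_time = K + L * exp(c - p) for c.
    Requires pain_time > K.
    """
    return p + math.log((pain_time - K) / L)

# Hypothetical values, chosen only for illustration.
PAIN_TIME = 10.0
P_SENIOR, P_TYPICAL = 6.0, 3.0

c_senior = complexity_noticed_at(P_SENIOR, PAIN_TIME)
c_typical = complexity_noticed_at(P_TYPICAL, PAIN_TIME)

print(f"typical programmer feels the pain at complexity {c_typical:.2f}")
print(f"senior programmer feels the pain at complexity {c_senior:.2f}")
print(f"typical programmer's time at that point: "
      f"{task_time(P_TYPICAL, c_senior):.1f}")
```

In this model the two noticing points are separated by exactly the ability gap, and by the time the senior programmer reaches the pain threshold, the typical programmer's time is many multiples of it.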

They could, therefore, ask their newest, junior programmers. This makes some sense as well, but because junior programmers have often not seen the long-term maintenance problems of technical debt, they may not recognize it when they see it. Telling the difference between necessary complexity (hard to understand because what it does is just hard), and technical debt (hard to understand because it isn't written as well as it could be), requires more experience than new programmers will have.

Ideally, they would bring in outside auditors, programmers who would not know their code too well, but who would know programming generally, so that they could recognize and perhaps even measure how bad the technical debt is. It would be the equivalent of being able to look at your credit card balance, so that you would know when it's increasing prior to the point when your card comes back rejected. However, such an auditor would be expensive, because programmers good enough to do the job can make decent money actually programming. Most organizations have a shortage of good programmers, and don't have any to spare to use as auditors of their code.

Thus I think the best measure of technical debt which a manager could realistically use would be to make sure that every year they employ short-term contractors for some tasks. One feature of technical debt is that it makes the system take a long time for programmers to learn; ask a contract programmer after two months which parts of a system are excessively complex, and they will almost certainly have an answer. Moreover, if an experienced contract programmer takes too long to learn the system well enough to contribute, then you have gone well past the point when paying down technical debt should be the highest priority.

This is not to say that all of the team's programmers should be contractors. Paying down technical debt is hard to do, and is best done by people with long-term familiarity with (and a long-term stake in) the code base. Code complexity is something that is best attacked before it is a noticeable problem; evaluating the performance of experienced contract programmers is one way to get an early warning.

And if you find that your best programmers are 10x as productive as your median ones, this is not a reason to congratulate yourself for picking the best. It is a sign that you are nearing the precipice where not even the best will be able to work with that Ball of Mud anymore.